The “Computer Vision with Deep Learning” module covers four main chapters: Introduction to Deep Learning, Convolutional Neural Networks (CNNs), Object Detection, and Image Segmentation. It also includes two NVIDIA workshops: Fundamentals of Deep Learning and Industrial Inspection with Computer Vision.

- Teacher: Noura Aboudi

- Teacher: Olfa Besbes

This course is a template. Please add your description and change the course photo.

- Teacher: Mohamed Amine Marzouk

This course introduces the use of cloud computing platforms to support machine learning workflows. It presents the basic principles of cloud infrastructure and how these services are used for storing data, running computations, and managing machine learning processes. The course covers topics such as cloud paradigms, cloud infrastructure, and data management in cloud environments. Practical sessions allow students to explore how machine learning tasks can be performed using cloud-based tools and services.

- Teacher: Ameny Rjiba

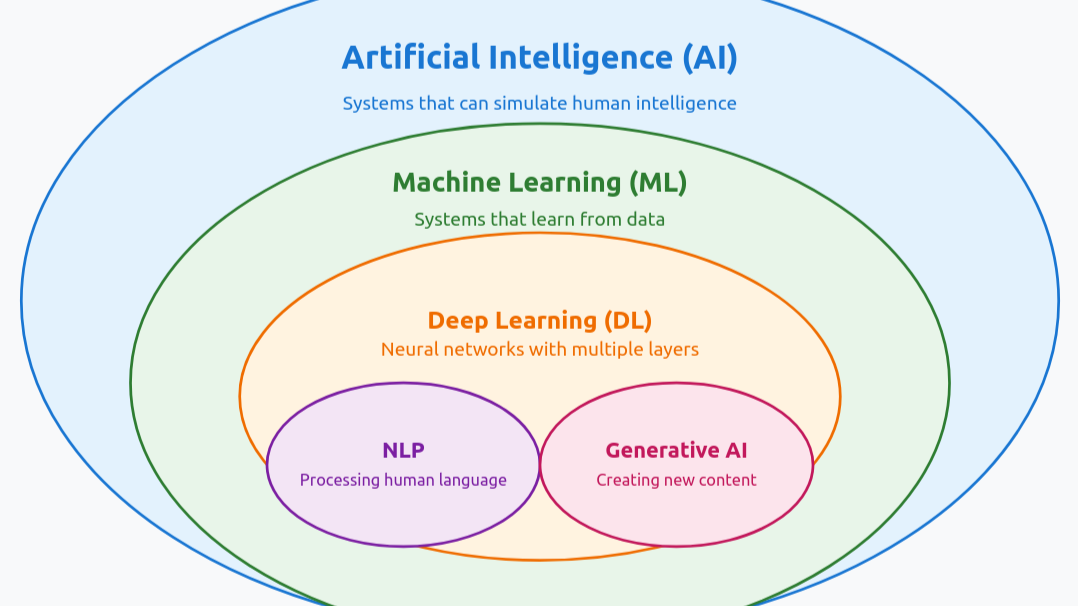

Natural language processing (NLP), or computational linguistics, is one of the most important technologies of the information age. Applications of NLP are everywhere because people communicate almost everything in language. Deep learning approaches have achieved very high performance across many NLP tasks, and the scaling of Large Language Models (LLMs), such as those behind ChatGPT, has driven further progress. In this course, students receive a thorough introduction to both the basics of deep learning for NLP and the latest research on LLMs. Through lectures, assignments, and one NVIDIA workshop, students learn the skills needed to design, implement, and understand their own NLP and LLM models using frameworks such as LangChain, PyTorch, and Hugging Face.

- Teacher: Khouloud Chelbi

This course is a template. Please add your description and change the course photo.

- Teacher: Imen Ben Abdelwahed

This Big Data course introduces the fundamental concepts and architectures underlying the creation of distributed Big Data environments and the tools used to manage large-scale data.

It covers the Hadoop ecosystem, including HDFS and the MapReduce programming model, allowing students to simulate the behavior of Hadoop for distributed storage and parallel data processing.
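
As an illustration of the MapReduce programming model mentioned above, here is a minimal sketch in plain Python. It does not use Hadoop itself: the function names (`map_phase`, `shuffle_phase`, `reduce_phase`, `word_count`) and the sample input are assumptions made for this example, and the "splits" are ordinary strings standing in for blocks of a distributed file.

```python
from collections import defaultdict


def map_phase(split):
    """Map step: emit a (word, 1) pair for every word in one input split."""
    return [(word.lower(), 1) for word in split.split()]


def shuffle_phase(mapped_pairs):
    """Shuffle step: group all emitted values by key between map and reduce."""
    grouped = defaultdict(list)
    for key, value in mapped_pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(key, values):
    """Reduce step: combine all values for one key into a single count."""
    return key, sum(values)


def word_count(splits):
    """Run the three phases sequentially (a real cluster runs them in parallel)."""
    mapped = [pair for split in splits for pair in map_phase(split)]
    grouped = shuffle_phase(mapped)
    return dict(reduce_phase(key, values) for key, values in grouped.items())


# Hypothetical input: two splits, as if stored on two different nodes.
splits = ["big data big ideas", "data moves fast"]
counts = word_count(splits)
print(counts)
```

In this sketch each split is mapped independently, which is the property that lets a real framework distribute the map step across machines.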

The course also explores Apache Spark for efficient in-memory computation, with a focus on micro-batch processing using Spark Streaming.
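
The idea behind micro-batch processing can be sketched without Spark: incoming events are cut into small fixed-width time intervals, and each interval is processed as an ordinary batch. The snippet below is a plain-Python illustration only, not the Spark Streaming API. The helper `micro_batches`, the one-second interval, and the sample events are all assumptions made for this example.

```python
from collections import Counter


def micro_batches(events, interval):
    """Group (timestamp, value) events into consecutive fixed-width time windows.

    Intervals that receive no events are skipped in this simplified version.
    """
    batches = {}
    for timestamp, value in events:
        batch_id = int(timestamp // interval)
        batches.setdefault(batch_id, []).append(value)
    return [batches[batch_id] for batch_id in sorted(batches)]


# Hypothetical stream: (arrival time in seconds, event key).
events = [(0.2, "a"), (0.7, "b"), (1.1, "a"), (1.9, "a"), (2.5, "c")]

running = Counter()   # state carried across batches
snapshots = []        # running totals after each micro-batch
for batch in micro_batches(events, interval=1.0):
    running.update(batch)            # process the whole batch at once
    snapshots.append(dict(running))

print(snapshots)
```

The trade-off this illustrates is latency versus throughput: results are only updated once per interval, but each update handles a whole group of events in one pass.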

Additionally, students apply machine learning techniques, particularly Decision Tree models, using Spark ML to analyze and process large datasets in distributed environments.

- Teacher: Rania Yangui